Infographic

3 Key Findings from Jumio’s 2024 Online Identity Consumer Study

Charts

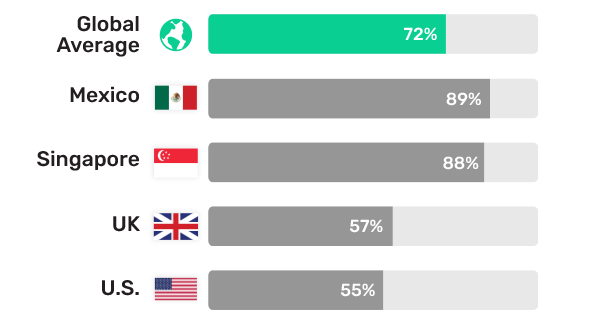

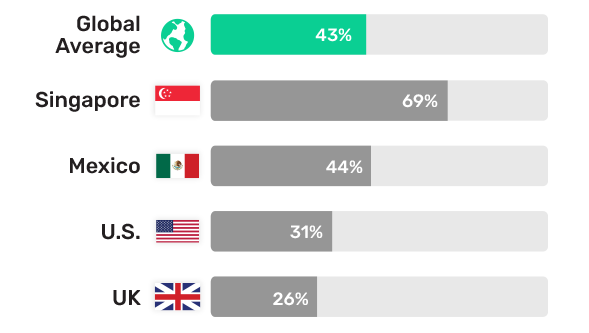

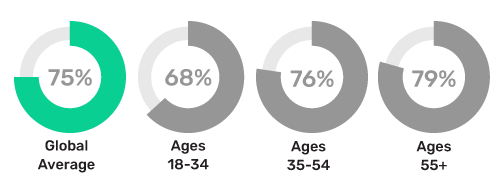

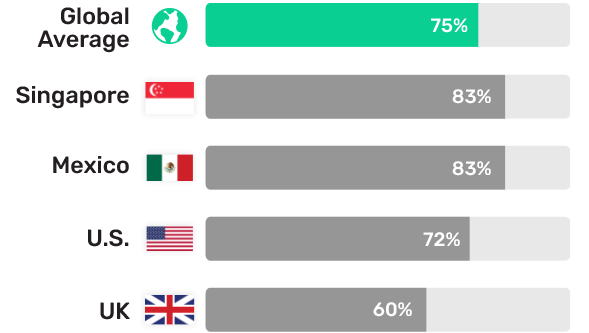

72% of consumers worry about being fooled by a deepfake

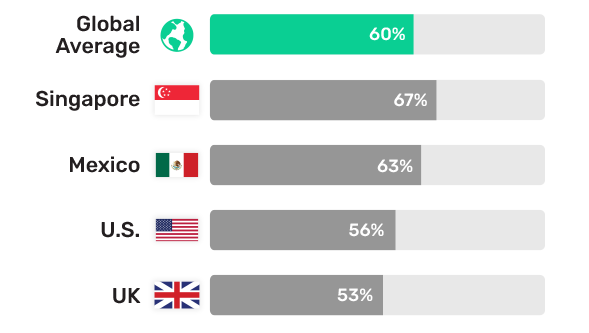

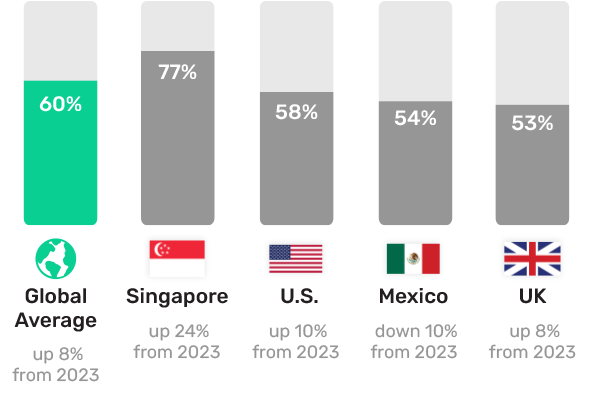

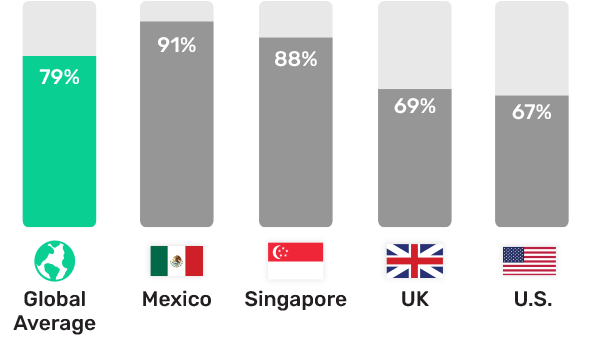

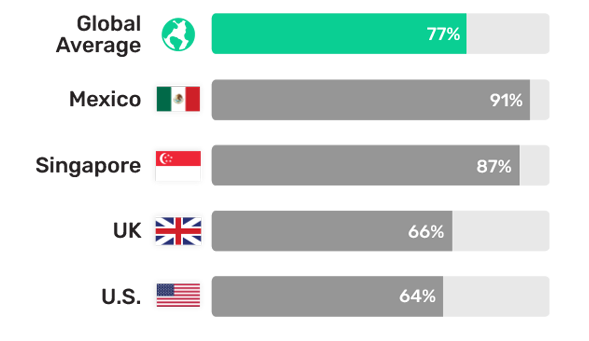

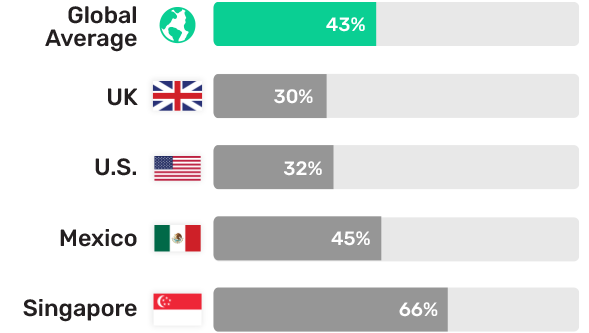

79% of consumers worry about online data breaches, and 77% worry about their accounts being hacked

News

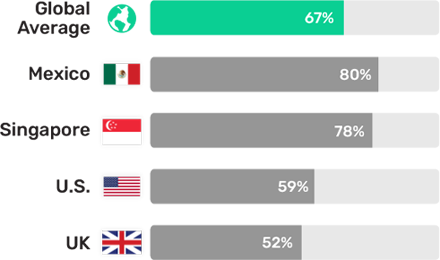

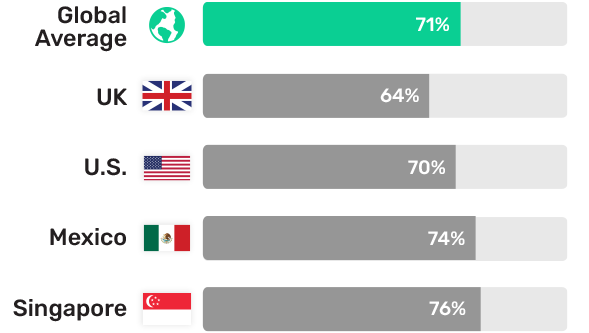

Global survey shows 83% of Singapore consumers worry deepfakes will influence next election

Global survey shows 72% of Americans worry deepfakes will influence upcoming elections

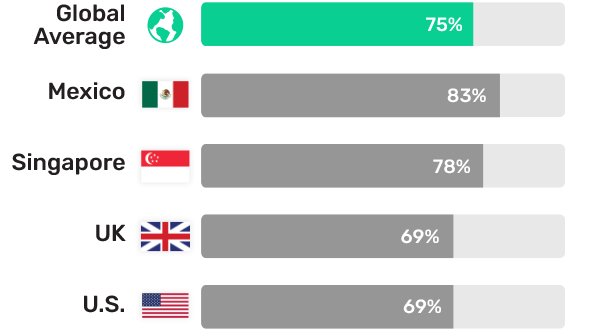

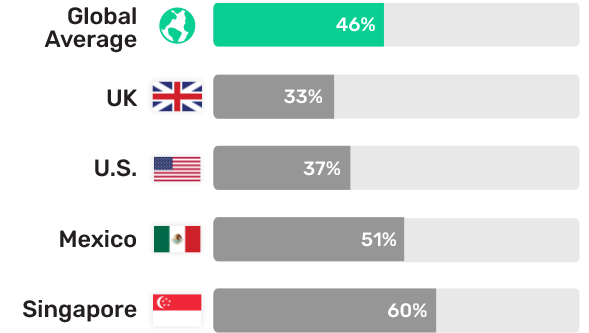

Global survey finds 75% of consumers ready to switch banks over inadequate fraud protection

Global survey shows 72% of consumers worry about being fooled by deepfakes on a daily basis